What Are AI Agents?

AI Agents Defined

An artificial intelligence (AI) agent is a system that can independently carry out tasks by creating and following workflows using the tools it has available.

These agents go far beyond simple language processing. They are capable of making decisions, solving problems, interacting with external systems, and taking actions based on real-time inputs.

AI agents are widely used to handle complex tasks in business environments, such as software development, IT automation, code generation, and conversational support. By leveraging the advanced natural language capabilities of large language models (LLMs), they can interpret user requests, work through them step by step, generate appropriate responses, and decide when to use external tools to complete a task.

Key Features of an AI Agent

The foundation of an AI agent lies in its ability to reason and act, a pattern often formalized in frameworks such as ReAct (Reasoning and Acting). However, modern AI agents have evolved to include a broader set of capabilities that make them more intelligent and adaptable.

Reasoning

This is the core thinking ability of an AI agent. It involves analyzing information, identifying patterns, and drawing logical conclusions. Strong reasoning allows agents to make informed decisions based on data, context, and evidence.

Acting

AI agents must be able to take action based on their decisions. These actions can be physical, in the case of robotics, or digital, such as sending messages, updating systems, or triggering automated processes to achieve a goal.

Observing

To make accurate decisions, agents need to understand their environment. This is done by collecting and interpreting data through methods like natural language processing, computer vision, or sensor inputs.

Planning

Planning enables an AI agent to map out the steps needed to reach a goal. It involves evaluating different options, predicting possible outcomes, and selecting the most effective path while considering potential challenges.

Collaborating

In many scenarios, AI agents work alongside humans or other agents. Effective collaboration requires communication, coordination, and the ability to align with shared objectives in dynamic environments.

Self-Refining

Advanced AI agents can improve themselves over time. By learning from past experiences and feedback, they adjust their behavior and become more effective, using techniques such as machine learning and optimization.

What Is the Difference Between AI Agents, AI Assistants, and Bots?

AI systems come in different forms depending on how autonomous and capable they are. While the terms are sometimes used interchangeably, there are clear differences between AI agents, AI assistants, and bots.

AI Assistants

AI assistants are a type of AI agent designed to work closely with users. They understand natural language, respond to requests, and help complete tasks step by step. These assistants are often built into apps or platforms and interact continuously with users during a task. While they can suggest actions and provide insights, the final decisions are usually made by the user.

AI Agents vs AI Assistants vs Bots

| | AI Agents | AI Assistants | Bots |
|---|---|---|---|
| Purpose | Operate independently to complete tasks and achieve goals | Help users perform tasks through guidance and interaction | Automate basic tasks or simple conversations |
| Capabilities | Handle complex, multi-step processes, learn over time, and make decisions on their own | Respond to prompts, provide information, and assist with simpler actions while relying on user input | Follow fixed rules, offer limited functionality, and support basic interactions |
| Interaction style | Proactive and goal-driven | Reactive and user-guided | Reactive and trigger-based |

Key Differences

Autonomy

AI agents have the highest level of independence, as they can plan and act without constant input. AI assistants require user involvement, while bots operate strictly within predefined instructions.

Complexity

AI agents are built for complex workflows and advanced problem-solving. AI assistants and bots are better suited for simpler tasks and straightforward interactions.

Learning Ability

AI agents typically improve over time using machine learning and feedback. AI assistants may have limited learning capabilities, while bots generally do not learn and rely on fixed programming.

How Do AI Agents Work?

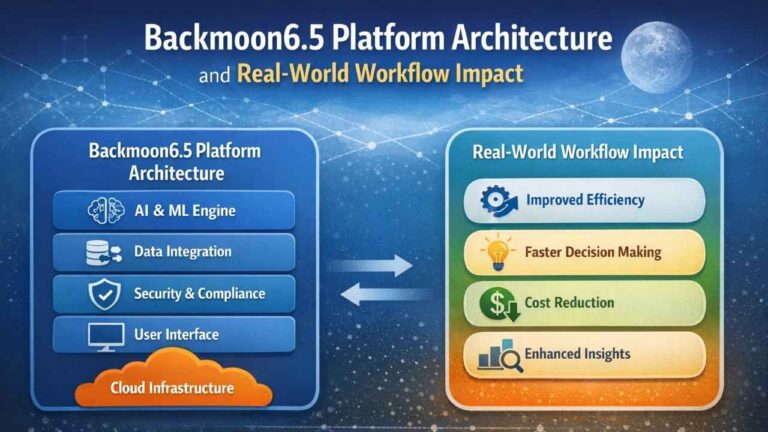

At the heart of most AI agents are large language models (LLMs), which is why they are often called LLM-based agents. Traditional LLMs generate responses based only on their training data, meaning they can be limited by outdated information and fixed reasoning capabilities. In contrast, modern agent-based systems enhance this by using tool integration in the background to access real-time data, streamline workflows, and break complex objectives into smaller, manageable tasks.

As these systems interact with users, they gradually learn and adjust to preferences over time. By storing previous interactions and using that memory to guide future actions, AI agents can deliver more personalized and accurate responses.

Another key advantage is that many of these processes, including tool usage, can happen automatically without human involvement. This significantly expands the real-world potential of AI agents. Typically, their functionality is structured around three core stages or components that define how they operate:

Goal Initialization and Planning

While AI agents can make decisions on their own, they still rely on goals and guidelines set by humans. Their behavior is mainly shaped by three groups:

- The developers who design and train the AI system

- The team that deploys the agent and makes it accessible to users

- The end user who defines the task, sets objectives, and determines which tools the agent can use

Once a goal is provided, the AI agent evaluates the available tools and breaks the objective into smaller, manageable steps. This process, known as task decomposition, helps improve efficiency and accuracy when handling complex tasks.
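Task decomposition can be sketched as a planner that does three things. It turns a goal into subtasks, checks that each subtask maps to an available tool, and orders the subtasks so that prerequisites run first. This is a minimal sketch under stated assumptions. `plan_with_llm` is a hypothetical stand-in for a real LLM planning call and returns a fixed plan. The subtask and tool names are invented for illustration. Ordering uses the standard library's `graphlib.TopologicalSorter`.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter  # Python 3.9+


@dataclass
class Subtask:
    name: str
    tool: str  # hypothetical tool the agent expects to call for this step
    depends_on: list[str] = field(default_factory=list)


def plan_with_llm(goal: str) -> list[Subtask]:
    """Stand-in for an LLM planning call (hypothetical): returns a fixed plan."""
    return [
        Subtask("fetch_weather_history", tool="weather_db"),
        Subtask("lookup_surf_conditions", tool="surf_knowledge"),
        Subtask("rank_weeks", tool="analysis",
                depends_on=["fetch_weather_history", "lookup_surf_conditions"]),
        Subtask("write_answer", tool="llm", depends_on=["rank_weeks"]),
    ]


def decompose(goal: str, available_tools: set[str]) -> list[Subtask]:
    """Break a goal into subtasks, check tool coverage, and order by dependencies."""
    plan = plan_with_llm(goal)
    missing = {t.tool for t in plan} - available_tools
    if missing:
        raise ValueError(f"plan needs unavailable tools: {sorted(missing)}")
    by_name = {t.name: t for t in plan}
    graph = {t.name: set(t.depends_on) for t in plan}  # node -> prerequisites
    return [by_name[name] for name in TopologicalSorter(graph).static_order()]
```

Suppose `decompose` is called with the tools `weather_db`, `surf_knowledge`, `analysis`, and `llm`. It then places both data-gathering steps before `rank_weeks`. If a required tool is missing, it raises `ValueError`. The two gathering steps do not depend on each other, so an agent could run them in parallel using `TopologicalSorter`'s `get_ready()` and `done()` methods.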

For simpler tasks, detailed planning may not be required. In such cases, the agent can refine its responses iteratively, reflecting on and improving each step without creating a full plan in advance.

Reasoning With Available Tools

AI agents make decisions based on the information they can access, but they do not always have everything needed to complete every part of a complex task. To fill in those gaps, they use available tools such as external databases, web searches, APIs, and even other specialized agents.

Once new information is collected, the agent updates its understanding and begins a reasoning process that helps it evaluate the task more effectively. This includes reviewing its current approach, adjusting its plan, and correcting itself when needed. As a result, the agent can make smarter and more flexible decisions.
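The reason, act, observe cycle described above can be sketched as a loop in the style of ReAct. In this minimal sketch, `scripted_policy` is a hypothetical stand-in for the LLM that decides the next step. The two tools are also hypothetical and return canned strings. Unknown tool names are reported back as observations, which gives the policy a chance to correct itself.

```python
from typing import Callable

# Hypothetical tools: each takes a string argument and returns an observation.
TOOLS: dict[str, Callable[[str], str]] = {
    "weather_db": lambda place: f"daily weather history for {place}",
    "surf_expert": lambda _: "good surf: high tides, sunny skies, little rain",
}


def scripted_policy(goal: str, observations: list[str]) -> tuple[str, str]:
    """Stand-in for an LLM: choose the next (action, argument) from what is known."""
    if not observations:
        return "weather_db", "Greece"
    if len(observations) == 1:
        return "surf_expert", goal
    return "finish", f"answer to {goal!r} using {len(observations)} observations"


def run_agent(goal: str, max_steps: int = 5) -> tuple[str, list[str]]:
    """Reason-act-observe loop: pick an action, run the tool, feed the result back."""
    observations: list[str] = []
    trace: list[str] = []
    for _ in range(max_steps):
        action, arg = scripted_policy(goal, observations)  # reason
        trace.append(f"{action}({arg})")
        if action == "finish":
            return arg, trace
        tool = TOOLS.get(action)
        if tool is None:
            # Report the error as an observation so the policy can correct itself.
            observations.append(f"error: unknown tool {action!r}")
            continue
        observations.append(tool(arg))  # act, then observe
    return "stopped: step limit reached", trace
```

The `max_steps` cap keeps a confused policy from looping forever.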

A simple example is vacation planning. Imagine a user asks an AI agent to predict which week next year would offer the best weather for a surfing trip to Greece.

Since the language model behind the agent is not an expert in weather forecasting, it cannot depend only on its built-in knowledge. Instead, it retrieves historical weather data for Greece from an external source, such as a database with daily weather reports from previous years.

Even with that information, the agent may still not know what weather conditions are ideal for surfing. So it creates another subtask and consults a different tool or specialized agent focused on surfing knowledge. From that source, it may learn that the best surfing conditions usually include high tides, sunny skies, and little to no rain.

With both sets of information, the agent can now connect the patterns and make a useful prediction. It can identify the week most likely to offer high tides, clear weather, and a low chance of rain in Greece, then present that recommendation to the user. This ability to combine knowledge from multiple tools is what makes AI agents more capable and versatile than traditional AI systems.
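The surfing example can be reduced to a small computation that joins the output of two tools. Everything here is hypothetical. The weekly readings are invented sample values standing in for the historical weather database. The numeric thresholds stand in for what a surf-knowledge tool might return for "high tides, sunny skies, and little to no rain".

```python
# Hypothetical output of the weather tool: week number -> past daily readings of
# (tide height in m, sunshine hours, rain in mm). The numbers are made up for illustration.
history = {
    23: [(1.2, 10.0, 0.0), (0.8, 9.0, 0.0), (1.1, 11.0, 0.5)],
    24: [(1.4, 12.0, 0.0), (1.3, 11.5, 0.0), (1.2, 10.5, 0.0)],
    25: [(1.5, 6.0, 4.0), (1.6, 5.0, 7.5), (1.1, 8.0, 1.0)],
}

# Hypothetical thresholds returned by the surf-knowledge tool:
# high tides, sunny skies, little to no rain.
criteria = {"min_tide_m": 1.0, "min_sun_h": 8.0, "max_rain_mm": 1.0}


def good_surf_day(tide: float, sun: float, rain: float, c: dict) -> bool:
    return tide >= c["min_tide_m"] and sun >= c["min_sun_h"] and rain <= c["max_rain_mm"]


def best_week(history: dict, c: dict) -> tuple[int, float]:
    """Join both tools' outputs: score each week by the share of past days meeting the criteria."""
    scores = {
        week: sum(good_surf_day(*day, c) for day in days) / len(days)
        for week, days in history.items()
    }
    week = max(scores, key=scores.get)
    return week, scores[week]
```

With this sample data, `best_week(history, criteria)` returns `(24, 1.0)`. Every recorded day in week 24 met all three criteria. By comparison, two of three days met them in week 23 and one of three in week 25.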

Learning and Reflection

AI agents continuously improve their performance through feedback mechanisms, including input from other agents and human-in-the-loop (HITL) guidance. Revisiting the surfing example, once the agent delivers its recommendation, it saves both the information it gathered and the user’s feedback. This helps the system refine its future responses and better match user preferences over time.

When multiple agents are involved, they can also exchange feedback with each other. This collaborative input often reduces the need for constant human intervention and speeds up the overall process. At the same time, users can still provide feedback during different stages of the agent’s actions to ensure the results align with their expectations.

This ongoing cycle of improvement is known as iterative refinement, where the agent gradually enhances its reasoning and accuracy. Additionally, AI agents can store past solutions and challenges in a knowledge base, allowing them to avoid repeating mistakes and handle similar tasks more effectively in the future.
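A knowledge base of past solutions and feedback can be as simple as a mapping from each task to its answers and the user's verdict on each. This minimal sketch assumes exact task-string matching and a yes/no approval signal. `KnowledgeBase` and `answer_task` are hypothetical names. Production systems often retrieve similar past tasks with embeddings instead of exact matches.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class KnowledgeBase:
    """Hypothetical in-memory store: task -> list of (answer, approved_by_user)."""
    entries: dict = field(default_factory=dict)

    def record(self, task: str, answer: str, approved: bool) -> None:
        self.entries.setdefault(task, []).append((answer, approved))

    def rejected(self, task: str) -> set:
        return {answer for answer, ok in self.entries.get(task, []) if not ok}

    def last_approved(self, task: str) -> Optional[str]:
        for answer, ok in reversed(self.entries.get(task, [])):
            if ok:
                return answer
        return None


def answer_task(task: str, candidates: list, kb: KnowledgeBase) -> str:
    """Reuse an approved answer if one exists; otherwise skip answers users rejected before."""
    previous = kb.last_approved(task)
    if previous is not None:
        return previous
    for candidate in candidates:
        if candidate not in kb.rejected(task):
            return candidate
    raise LookupError(f"all candidates for {task!r} were rejected earlier")
```

Once a user rejects an answer, the agent stops offering it for that task. Once a user approves one, the agent reuses it directly.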

Agentic vs Non-Agentic AI Chatbots

AI chatbots rely on conversational technologies like natural language processing (NLP) to understand user queries and generate responses. While chatbots represent a type of interface or application, “agency” refers to the underlying capability that enables systems to act more independently and intelligently.

Non-agentic chatbots operate without tools, memory, or advanced reasoning abilities. They are limited to short-term interactions and cannot plan ahead. These systems depend heavily on continuous user input and typically respond only to direct prompts without deeper context.

Although they can handle common or predictable questions reasonably well, they struggle with personalized or complex queries. Since they lack memory, they cannot retain past interactions or learn from previous mistakes, which limits their ability to improve over time.

In contrast, agentic chatbots are designed to evolve with user interactions. They can remember past conversations, adapt to preferences, and provide more tailored and in-depth responses. These systems are capable of breaking down complex tasks into smaller steps, executing them independently, and adjusting their approach when needed. By evaluating and using available tools, agentic chatbots can also fill in knowledge gaps and deliver more accurate and useful outcomes.

Types of AI Agents

AI agents can be designed with different levels of complexity depending on the task they are meant to handle. For simple objectives, a basic agent is often more efficient and avoids unnecessary computational effort. Broadly, AI agents can be categorized into five types, ranging from the most basic to the most advanced.

1. Simple Reflex Agents

Simple reflex agents are the most basic form of AI agents. They make decisions purely based on current input or perception, without using memory or interacting with other systems. Their behavior follows predefined rules, meaning they respond to specific conditions with fixed actions.

Because of this rule-based approach, these agents cannot handle situations they were not explicitly programmed for. They work best in fully observable environments where all necessary information is readily available.

Example:

A thermostat that automatically turns on the heating system at a specific time, such as activating heat at 8 PM every day.
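A simple reflex agent reduces to a table of condition-action rules evaluated against the current percept only. This sketch models the thermostat example with hypothetical rules keyed on the time of day. It keeps no state, so identical inputs always yield identical actions.

```python
from datetime import time
from typing import Callable

Rule = tuple[Callable[[time], bool], str]

# Hypothetical rule table for the thermostat: condition on the current time -> action.
RULES: list[Rule] = [
    (lambda now: now >= time(20, 0), "heat_on"),   # from 8 PM until midnight
    (lambda now: now < time(20, 0), "heat_off"),
]


def simple_reflex_agent(percept: time) -> str:
    """Return the action of the first rule whose condition matches the current percept."""
    for condition, action in RULES:
        if condition(percept):
            return action
    return "no_op"  # fallback for rule tables that do not cover every input
```

Any situation the rule table does not anticipate falls through to `no_op`. This is the limitation described above.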

2. Model-Based Reflex Agents

Model-based reflex agents build on simple reflex systems by combining current input with stored memory. They maintain an internal representation of their environment, which gets updated as new information is received. Their decisions are based on this internal model, along with predefined rules, past observations, and the current situation.

Unlike simple reflex agents, these systems can remember past data and function in environments that are not fully visible or that change over time. However, they still rely on fixed rules, which can limit their flexibility in more complex scenarios.

Example:

A robot vacuum cleaner that navigates around furniture while cleaning. It detects obstacles in real time and adjusts its path, while also remembering which areas have already been cleaned to avoid repeating the same spots.
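What sets a model-based agent apart from a simple reflex agent is the internal model its rules consult. This is a minimal sketch of the vacuum example. The percept is assumed to be the robot's grid position plus the directions its sensors report as blocked. The agent remembers cleaned cells and obstacles it has seen. Its fixed rule prefers an open neighbour it has not cleaned yet. The grid representation and the `VacuumAgent` name are hypothetical.

```python
from dataclasses import dataclass, field

Pos = tuple[int, int]
# Grid moves in a fixed preference order: (dx, dy).
MOVES = {"north": (0, -1), "south": (0, 1), "east": (1, 0), "west": (-1, 0)}


@dataclass
class VacuumAgent:
    """Model-based reflex agent: its rules consult an internal map, not just the current percept."""
    cleaned: set = field(default_factory=set)    # cells the agent remembers cleaning
    obstacles: set = field(default_factory=set)  # cells the agent has seen blocked

    def step(self, pos: Pos, blocked_dirs: set[str]) -> str:
        # 1. Update the internal model from the current percept.
        self.cleaned.add(pos)
        for d in blocked_dirs:
            dx, dy = MOVES[d]
            self.obstacles.add((pos[0] + dx, pos[1] + dy))
        # 2. Fixed rule: move toward a neighbour the model says is open and still dirty.
        for d, (dx, dy) in MOVES.items():
            nxt = (pos[0] + dx, pos[1] + dy)
            if nxt not in self.obstacles and nxt not in self.cleaned:
                return d
        return "stop"  # no dirty neighbour known; a real robot would plan a path elsewhere
```

Consider a 2 by 2 room. The agent visits each cell once and then stops. A memoryless agent could keep bouncing between cells it has already cleaned.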

3. Goal-Based Agents

Goal-based agents take intelligence a step further by combining an internal model of the environment with clearly defined objectives. Instead of reacting only to current conditions, these agents evaluate different possible actions and select the ones that help achieve their goal. They plan ahead by analyzing sequences of steps before taking action.

This ability to search and plan makes them more effective than both simple and model-based reflex agents, especially in scenarios that require decision-making and optimization.

Example:

A navigation system that suggests the fastest route to a destination. It analyzes multiple possible paths and continuously updates its recommendation if a quicker route becomes available, ensuring the goal is reached in the most efficient way.
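A goal-based navigation agent searches over sequences of roads before acting. Dijkstra's shortest-path algorithm is one standard way to do this when every road has a non-negative travel time. We chose it for this sketch; the original text does not name an algorithm. The sketch uses a made-up road network with times in minutes. It simply re-runs the search when travel times change, which is how an updated recommendation would arise.

```python
import heapq


def fastest_route(graph: dict[str, dict[str, float]], start: str, goal: str) -> tuple[float, list[str]]:
    """Dijkstra's algorithm: find the sequence of roads that reaches the goal soonest."""
    queue = [(0.0, start, [start])]  # (minutes so far, node, path taken)
    visited: set[str] = set()
    while queue:
        minutes, node, path = heapq.heappop(queue)
        if node == goal:
            return minutes, path
        if node in visited:
            continue
        visited.add(node)
        for nxt, cost in graph.get(node, {}).items():
            if nxt not in visited:
                heapq.heappush(queue, (minutes + cost, nxt, path + [nxt]))
    raise ValueError(f"no route from {start} to {goal}")
```

Take the hypothetical network `{"A": {"B": 10, "C": 15}, "B": {"D": 12}, "C": {"D": 5}, "D": {}}`. Here `fastest_route(roads, "A", "D")` returns `(20.0, ["A", "C", "D"])`. Setting `roads["C"]["D"] = 30` simulates traffic, and the same call then returns `(22.0, ["A", "B", "D"])`.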

4. Utility-Based Agents

Utility-based agents go beyond simply achieving a goal. They aim to choose the best possible outcome by evaluating different actions based on a defined measure of usefulness, known as a utility function. This function assigns a value to each possible scenario, reflecting how beneficial or desirable it is for the agent.

The evaluation can consider multiple factors, such as progress toward the goal, time taken, cost, or computational effort. The agent then compares these options and selects the action that delivers the highest overall value or satisfaction.

This approach is especially useful when several paths can achieve the same goal, but one option is clearly more efficient or advantageous than the others.

Example:

A navigation system that not only finds a route to your destination but also chooses the one that best balances fuel efficiency, travel time, and toll costs, giving the best journey overall.
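A utility function makes the trade-off explicit by turning each option into a single score. This sketch assumes a linear utility: the negative weighted sum of travel time, fuel, and tolls. The weights are hypothetical, and real systems may use very different functions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    name: str
    minutes: float
    fuel_litres: float
    tolls_eur: float


# Hypothetical preference weights: how much one unit of each cost counts against a route.
WEIGHTS = {"minutes": 0.5, "fuel_litres": 2.0, "tolls_eur": 1.0}


def utility(route: Route, weights: dict = WEIGHTS) -> float:
    """Higher is better: the negative weighted sum of the route's costs."""
    return -sum(w * getattr(route, attr) for attr, w in weights.items())


def choose_route(routes: list, weights: dict = WEIGHTS) -> Route:
    """Pick the route with the highest utility, not merely one that reaches the goal."""
    return max(routes, key=lambda r: utility(r, weights))
```

Under the default weights, a toll-free coast road (55 min, 5 L) scores -37.5. That beats a motorway (40 min, 6 L, 8 EUR toll) at -40, even though both reach the destination. Raising the `minutes` weight to 2.0 flips the choice to the motorway. This is how one agent can serve users with different preferences.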

5. Learning Agents

Learning agents are the most advanced type of AI agents. While they include the capabilities of other agent types, their key strength is the ability to learn from experience. They continuously update their knowledge base with new data, allowing them to adapt and perform better in unfamiliar or changing environments. These agents can operate using goal-based or utility-based approaches while improving over time.

A learning agent typically consists of four main components:

Learning: This part enables the agent to gain knowledge from its environment using inputs such as observations and sensor data.

Critic: The critic evaluates the agent’s performance and provides feedback on whether its actions meet the desired standards.

Performance Element: This component is responsible for choosing actions based on what the agent has learned.

Problem Generator: This module suggests new actions or strategies, encouraging exploration and helping the agent improve further.

Example:

Personalized recommendation systems used by e-commerce platforms. These agents monitor user behavior and preferences, store that data, and use it to suggest relevant products or services. As users continue interacting, the system learns and refines its recommendations, becoming more accurate over time.
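The four components map naturally onto a small recommendation agent. This sketch uses an epsilon-greedy multi-armed bandit. We chose that technique for brevity; it is not the method of any particular platform. The components work as follows:

- The performance element recommends the product with the best estimated click rate.
- The problem generator occasionally proposes a random product to explore.
- The critic scores each outcome.
- The learning element updates the estimates.

`RecommenderAgent` and the click signal are hypothetical.

```python
import random
from typing import Callable


class RecommenderAgent:
    """Toy learning agent for product recommendations (epsilon-greedy bandit)."""

    def __init__(self, products: list, explore_rate: float = 0.1, seed: int = 0):
        self.estimates = {p: 0.0 for p in products}  # learned click-rate estimates
        self.counts = {p: 0 for p in products}
        self.explore_rate = explore_rate
        self.rng = random.Random(seed)

    def problem_generator(self) -> str:
        # Propose an exploratory action: a random product, regardless of estimates.
        return self.rng.choice(list(self.estimates))

    def performance_element(self) -> str:
        # Usually exploit the best-looking product; sometimes defer to exploration.
        if self.rng.random() < self.explore_rate:
            return self.problem_generator()
        return max(self.estimates, key=self.estimates.get)

    @staticmethod
    def critic(clicked: bool) -> float:
        # Compare the outcome with the performance standard: a click counts as success.
        return 1.0 if clicked else 0.0

    def learning_element(self, product: str, reward: float) -> None:
        # Update the running average reward for the recommended product.
        self.counts[product] += 1
        self.estimates[product] += (reward - self.estimates[product]) / self.counts[product]

    def interact(self, user_clicks: Callable[[str], bool]) -> str:
        product = self.performance_element()
        self.learning_element(product, self.critic(user_clicks(product)))
        return product
```

Consider a simulated user who only ever clicks `surfboard`. After a few hundred rounds, `surfboard` should have the highest estimate, even though the agent starts with no preference.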

Use Cases of AI Agents

AI agents are being applied across multiple industries, helping automate tasks, improve efficiency, and deliver more personalized experiences. Here are some key areas where they are making a significant impact:

Customer Experience

AI agents can be integrated into websites and applications to act as virtual assistants. They help users by answering questions, offering support, simulating interviews, and even assisting with mental well-being. With the availability of no-code tools, businesses can easily build and deploy these agents without advanced technical skills.

Healthcare

In healthcare, AI agents support a wide range of applications, especially when used in multi-agent systems. They can assist with treatment planning in emergency settings, streamline administrative processes, and manage medication workflows. This reduces the workload on medical staff, allowing them to focus on critical patient care.

Emergency Response

During natural disasters, AI agents can analyze data from social media platforms to identify individuals who may need help. By extracting and mapping location information, these systems enable faster and more effective rescue operations, ultimately saving lives in time-sensitive situations.

Finance and Supply Chain

AI agents are also widely used in finance and logistics. They can analyze real-time data, predict market trends, and optimize supply chain operations. Their ability to adapt to specific datasets allows for highly customized insights. However, when dealing with financial information, strong security and data privacy measures are essential.

Benefits of Using AI Agents

AI agents offer several advantages that make them valuable across industries, especially as automation and intelligent systems continue to evolve.

Task Automation

With rapid progress in generative AI and machine learning, AI agents are playing a key role in automating complex workflows. They can handle tasks that would normally require significant human effort, allowing goals to be achieved faster, at lower cost, and on a larger scale. As a result, less manual guidance is needed, since agents can plan and execute tasks independently.

Improved Performance

Systems that use multiple AI agents can outperform single-agent setups, particularly on complex tasks. Combining several approaches and perspectives increases the chance of better decisions, and when agents collaborate and share insights, they can refine their outputs through continuous feedback.

Additionally, agents that combine knowledge from specialized systems can produce more well-rounded results. This ability to work together and fill knowledge gaps is a key strength of agent-based frameworks and represents a major step forward in AI capabilities.

Higher Quality Responses

Compared to traditional AI models, AI agents can deliver more detailed, accurate, and personalized responses. This is largely due to their ability to use external tools, retain memory, and learn from interactions. By continuously updating their knowledge and adapting to user needs, they provide a more refined and user-focused experience.

Risks and Limitations of AI Agents

While AI agents offer powerful capabilities, they also come with challenges that must be carefully managed to ensure safe and reliable use.

Multi-Agent Dependencies

Complex tasks often require multiple AI agents working together. However, coordinating these systems can introduce risks. If several agents rely on the same underlying models, they may share the same weaknesses, which can lead to system-wide failures or make them more vulnerable to attacks. This makes strong data governance, proper training, and rigorous testing essential.

Infinite Feedback Loops

Although AI agents are designed to operate with minimal human input, this autonomy can sometimes lead to issues. If an agent fails to properly plan or evaluate its progress, it may repeatedly use the same tools without making progress, creating an endless loop. To prevent this, some level of human monitoring or safeguards is often necessary.
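One common safeguard is a guard object consulted before every tool call. It enforces a total step budget and flags identical calls that repeat too often. `LoopGuard` is a hypothetical helper, not part of any agent framework, and the limits are illustrative. In practice, a tripped guard would typically hand the task to a human rather than simply fail.

```python
from collections import Counter


class LoopGuard:
    """Hypothetical safeguard that stops an agent stuck repeating itself."""

    def __init__(self, max_steps: int = 20, max_repeats: int = 3):
        self.max_steps = max_steps
        self.max_repeats = max_repeats
        self.steps = 0
        self.calls = Counter()  # (tool, argument) -> number of times called

    def check(self, tool: str, argument: str) -> None:
        """Call before every tool invocation; raises RuntimeError when a limit is exceeded."""
        self.steps += 1
        self.calls[(tool, argument)] += 1
        if self.steps > self.max_steps:
            raise RuntimeError(f"step budget of {self.max_steps} exhausted")
        if self.calls[(tool, argument)] > self.max_repeats:
            raise RuntimeError(
                f"{tool}({argument!r}) called more than {self.max_repeats} times; escalate to a human"
            )
```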

Computational Complexity

Developing AI agents from scratch can require significant time and computational resources. Training high-performing systems is resource-intensive, and depending on the task, agents may take a long time to complete their processes. This can increase both development costs and operational overhead.

Data Privacy and Security

When AI agents are integrated into business systems, they may handle sensitive data, which raises important security concerns. For example, allowing agents to manage tasks like software development or pricing decisions without oversight can lead to unintended or risky outcomes.

To address these concerns, organizations and AI providers such as IBM, Microsoft, and OpenAI must implement strong security measures. Ensuring proper data protection, controlled access, and responsible deployment is critical to maintaining trust and minimizing potential risks.

Best Practices for Using AI Agents

To ensure AI agents are effective, safe, and reliable, it’s important to follow a set of best practices during development and deployment.

Activity Logs

Providing access to detailed logs of agent actions helps improve transparency. These logs can show which tools were used, what decisions were made, and how different agents contributed to achieving a goal. This visibility allows users to track the decision-making process, identify potential errors, and build trust in the system.
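Activity logs are easiest to analyse when each tool call produces one structured record. This sketch wraps tool functions in a hypothetical `logged_tool` decorator. The decorator writes one JSON line per call (agent, tool, arguments, outcome, duration) through Python's standard `logging` module. The field names are our own choice.

```python
import functools
import json
import logging
import time

logger = logging.getLogger("agent.activity")


def logged_tool(agent_id: str):
    """Hypothetical decorator: emit one JSON log line for every call of the wrapped tool."""
    def wrap(fn):
        @functools.wraps(fn)
        def inner(*args, **kwargs):
            start = time.perf_counter()
            status, result = "ok", None
            try:
                result = fn(*args, **kwargs)
                return result
            except Exception as exc:
                status, result = "error", repr(exc)
                raise  # log the failure, then let the caller handle it
            finally:
                logger.info(json.dumps({
                    "agent": agent_id,
                    "tool": fn.__name__,
                    "args": [repr(a) for a in args],
                    "kwargs": {k: repr(v) for k, v in kwargs.items()},
                    "status": status,
                    "result": repr(result)[:200],  # truncate large outputs
                    "ms": round((time.perf_counter() - start) * 1000, 1),
                }))
        return inner
    return wrap
```

Call `logging.basicConfig(level=logging.INFO)`, or attach your own handler, so the entries are actually emitted. A failed call is logged with `status` set to `"error"`, and the exception is re-raised.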

Interruption Mechanisms

AI agents should not be allowed to run indefinitely without control. In cases like infinite loops, tool misuse, or system malfunctions, it’s important to have the ability to pause or stop operations. Implementing interruptibility allows users to safely halt an agent’s actions when needed.

However, deciding when to interrupt requires careful judgment. In certain situations, such as critical emergencies, stopping an agent abruptly might cause more harm than allowing it to continue operating.
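Interruptibility can be sketched with two `threading.Event` flags, one for pausing and one for stopping. The agent checks them only between steps, so a stop request never cuts an action off halfway. This speaks to the concern above that an abrupt halt can itself cause harm. The steps are hypothetical zero-argument callables standing in for tool calls.

```python
import threading
from typing import Callable


class InterruptibleAgent:
    """Runs a sequence of steps and can be paused or stopped between steps."""

    def __init__(self, steps: list[Callable[[], None]]):
        self.steps = steps  # hypothetical agent actions, e.g. tool calls
        self.completed: list[int] = []
        self._stop = threading.Event()
        self._running = threading.Event()
        self._running.set()  # start unpaused

    def run(self) -> None:
        for i, step in enumerate(self.steps):
            self._running.wait()  # blocks while paused
            if self._stop.is_set():
                return  # halt at a step boundary, never in the middle of an action
            step()
            self.completed.append(i)

    def pause(self) -> None:
        self._running.clear()

    def resume(self) -> None:
        self._running.set()

    def stop(self) -> None:
        self._stop.set()
        self._running.set()  # wake a paused run so it can exit
```

Typically, `run` executes in a background thread (`threading.Thread(target=agent.run).start()`). Meanwhile, a supervisor calls `pause()`, `resume()`, or `stop()`.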

Unique Agent Identifiers

Assigning unique identifiers to AI agents can help prevent misuse and improve accountability. These identifiers make it easier to trace the origin of an agent, including its developers, deployers, and users. This added layer of traceability supports responsible usage and helps address issues if something goes wrong.
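One way to make an identifier traceable is to bind a random UUID to the developer, deployer, and user. The deployer then signs that record so any alteration is detectable. This is a hypothetical scheme built on the standard library's `uuid`, `hmac`, and `hashlib` modules. It is not an established standard for agent identity.

```python
import hashlib
import hmac
import json
import uuid
from dataclasses import asdict, dataclass, field


@dataclass(frozen=True)
class AgentIdentity:
    developer: str
    deployer: str
    user: str
    agent_id: str = field(default_factory=lambda: str(uuid.uuid4()))  # random, unique per agent


def sign(identity: AgentIdentity, key: bytes) -> str:
    """Deployer signs the identity record so tampering with any field is detectable."""
    payload = json.dumps(asdict(identity), sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify(identity: AgentIdentity, signature: str, key: bytes) -> bool:
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(sign(identity, key), signature)
```

Verifying requires the deployer's key. In a shared setting, an asymmetric signature would let third parties check the record without holding that secret.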

Human Supervision

Human oversight is especially important during the early stages of an agent’s deployment. By monitoring performance and providing feedback, users can help the agent learn and adapt more effectively to expectations.

In addition, it is considered best practice to require human approval for high-impact actions. Tasks such as sending bulk communications or executing financial transactions should involve human confirmation. Maintaining oversight in sensitive areas helps reduce risks and ensures safer operation of AI systems.
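A human-approval gate can be a thin wrapper that every action passes through. In this minimal sketch, the set `HIGH_IMPACT` and the `approve` callback are hypothetical. In a real deployment, `approve` might prompt an operator or open a review ticket. Low-impact actions run without interruption.

```python
from typing import Callable

# Hypothetical actions that always need a human sign-off.
HIGH_IMPACT = {"send_bulk_email", "transfer_funds"}


def execute(action: str, params: dict,
            run: Callable[[dict], str],
            approve: Callable[[str, dict], bool]) -> str:
    """Run low-impact actions directly; ask a human before high-impact ones."""
    if action in HIGH_IMPACT and not approve(action, params):
        return f"{action} blocked: human approval denied"
    return run(params)
```

For a console prototype, `approve` could be `lambda action, params: input(f"Allow {action} {params}? [y/N] ").strip().lower() == "y"`.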